Latest Kubernetes cluster deployment + Containerd container runtime + flannel network plugin (step-by-step tutorial, Kubernetes 1.28.2)

Founder

2024-11-10 11:06:46

Resource list

| OS | Spec | Hostname | IP | Required components |
|---|---|---|---|---|
| CentOS 7.9 | 2 CPU / 4 GB | k8s-master | 192.168.60.143 | flannel-cni-plugin, flannel, coredns, etcd, kube-apiserver, kube-controller-manager, kube-proxy, kube-scheduler, containerd, pause, crictl |
| CentOS 7.9 | 2 CPU / 4 GB | k8s-node1 | 192.168.60.144 | flannel-cni-plugin, flannel, kubectl, kube-proxy, containerd, pause, crictl, kubernetes-dashboard |
| CentOS 7.9 | 2 CPU / 4 GB | k8s-node2 | 192.168.60.145 | flannel-cni-plugin, flannel, kubectl, kube-proxy, containerd, pause, crictl, kubernetes-dashboard |

Component versions

- flannel-cni-plugin:v1.1.2

- flannel:v0.21.5

- coredns:v1.10.1

- etcd:3.5.9-0

- kube-apiserver:v1.28.0

- kube-controller-manager:v1.28.0

- kube-proxy:v1.28.0

- kube-scheduler:v1.28.0

- pause:3.9

- containerd:1.6.33-3.1.el7

- crictl:1.6.33

1 Environment preparation (run on all three machines)

1.1 Set the hostname on each machine

    ## On the master node: 192.168.60.143
    $ hostnamectl --static set-hostname k8s-master
    ## On node1: 192.168.60.144
    $ hostnamectl --static set-hostname k8s-node1
    ## On node2: 192.168.60.145
    $ hostnamectl --static set-hostname k8s-node2
    ## After the steps above, reboot the server
    $ reboot -f

1.2 Disable the firewall and SELinux

    ## Disable SELinux (the kernel security mechanism)
    $ sudo sestatus && sudo setenforce 0 && sudo sed -i 's/SELINUX=enforcing/SELINUX=disabled/g' /etc/selinux/config
    ## Stop the firewall and disable it at boot
    $ sudo systemctl stop firewalld && sudo systemctl disable firewalld && sudo systemctl status firewalld

1.3 Disable the swap partition

kubeadm does not support swap, so it must be turned off on every node.
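The permanent step in this section prefixes `#` to every fstab line that contains "swap", so running it twice stacks extra `#` characters and it also touches lines that merely mention the word. A minimal sketch of an idempotent variant is shown here; the helper name `comment_out_swap` and its file argument are hypothetical and not part of the original tutorial.

```shell
# comment_out_swap FILE
# Comment out every active fstab entry whose filesystem type (3rd field) is "swap".
# Hypothetical helper for illustration; pass /etc/fstab on a real host.
comment_out_swap() {
    _tmp=$(mktemp) || return 1
    # Lines that already start with "#" are printed unchanged, so a second run is a no-op.
    awk '!/^[[:space:]]*#/ && $3 == "swap" { print "#" $0; next } { print }' "$1" > "$_tmp" \
        && cat "$_tmp" > "$1"
    _rc=$?
    rm -f "$_tmp"
    return "$_rc"
}

# Usage on a real host (assumption: run as root):
#   swapoff -a
#   comment_out_swap /etc/fstab
```

Writing the result back with `cat` rather than `mv` keeps the original file's owner, mode and SELinux context.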

    # Disable temporarily
    $ sudo swapoff -a
    # Disable permanently
    $ sudo sed -i '/swap/s/^/#/' /etc/fstab

1.4 Map the hostnames on every cluster machine

(The names must match the hostnames set in 1.1.)
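Because this step appends to /etc/hosts, repeating it leaves duplicate entries. The sketch below adds a mapping only when the name is not already present; the helper name `ensure_host_entry` is hypothetical, and the hostnames in the usage comment are the ones set in 1.1.

```shell
# ensure_host_entry FILE IP NAME
# Append "IP NAME" to FILE unless an active line already maps NAME.
# Hypothetical helper for illustration; pass /etc/hosts on a real host.
ensure_host_entry() {
    # Scan every non-comment line for NAME as a whole field after the address column.
    if awk -v name="$3" '
        !/^[[:space:]]*#/ { for (i = 2; i <= NF; i++) if ($i == name) found = 1 }
        END { exit !found }' "$1"; then
        return 0
    fi
    printf '%s %s\n' "$2" "$3" >> "$1"
}

# Usage with the addresses from the resource list (assumption: run as root):
#   ensure_host_entry /etc/hosts 192.168.60.143 k8s-master
#   ensure_host_entry /etc/hosts 192.168.60.144 k8s-node1
#   ensure_host_entry /etc/hosts 192.168.60.145 k8s-node2
```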

    $ cat >> /etc/hosts << EOF
    192.168.60.143 k8s-master
    192.168.60.144 k8s-node1
    192.168.60.145 k8s-node2
    EOF

1.5 Kernel tuning

When using Docker you may sometimes see the following warnings; the steps below clear them:

- WARNING: bridge-nf-call-iptables is disabled

- WARNING: bridge-nf-call-ip6tables is disabled
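The `modprobe` calls in this step last only until the next reboot, and the bridge sysctl keys exist only while `br_netfilter` is loaded. One way to load both modules at boot is a file under /etc/modules-load.d, which systemd reads on CentOS 7. This is a sketch; the helper name `write_k8s_modules` and the file name `k8s.conf` are assumptions, not part of the original tutorial.

```shell
# write_k8s_modules FILE
# Write the module names (one per line) that should be loaded at boot.
# Hypothetical helper for illustration.
write_k8s_modules() {
    printf '%s\n' overlay br_netfilter > "$1"
}

# Usage on a real host (assumption: run as root):
#   write_k8s_modules /etc/modules-load.d/k8s.conf
```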

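    # These warnings can be cleared by setting kernel parameters
    $ cat >> /etc/sysctl.conf << EOF
    # Pass bridged IPv6 traffic to ip6tables
    net.bridge.bridge-nf-call-ip6tables = 1
    # Pass bridged IPv4 traffic to iptables
    net.bridge.bridge-nf-call-iptables = 1
    # Enable IP forwarding
    net.ipv4.ip_forward = 1
    # Avoid swapping as far as possible
    vm.swappiness = 0
    EOF
    ##
    # Load the overlay kernel module
    $ modprobe overlay
    # Load the br_netfilter kernel module
    $ modprobe br_netfilter
    # Apply the settings from the file
    $ sysctl -p

1.6 Set the CentOS base yum repository and install common tools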

    ## Fetch and set the yum repository
    $ sudo curl -o /etc/yum.repos.d/CentOS-Base.repo https://mirrors.aliyun.com/repo/Centos-7.repo
    ## Build the metadata cache
    $ sudo yum makecache fast
    ## Install common system tools
    $ sudo yum -y install vim lrzsz unzip wget net-tools tree bash-completion telnet

1.7 Synchronize the time on every node

    ## Install the time synchronization tool
    $ yum -y install ntpdate
    ## Synchronize with the Aliyun time server
    $ ntpdate ntp.aliyun.com

2 Containerd deployment (run on all three machines)

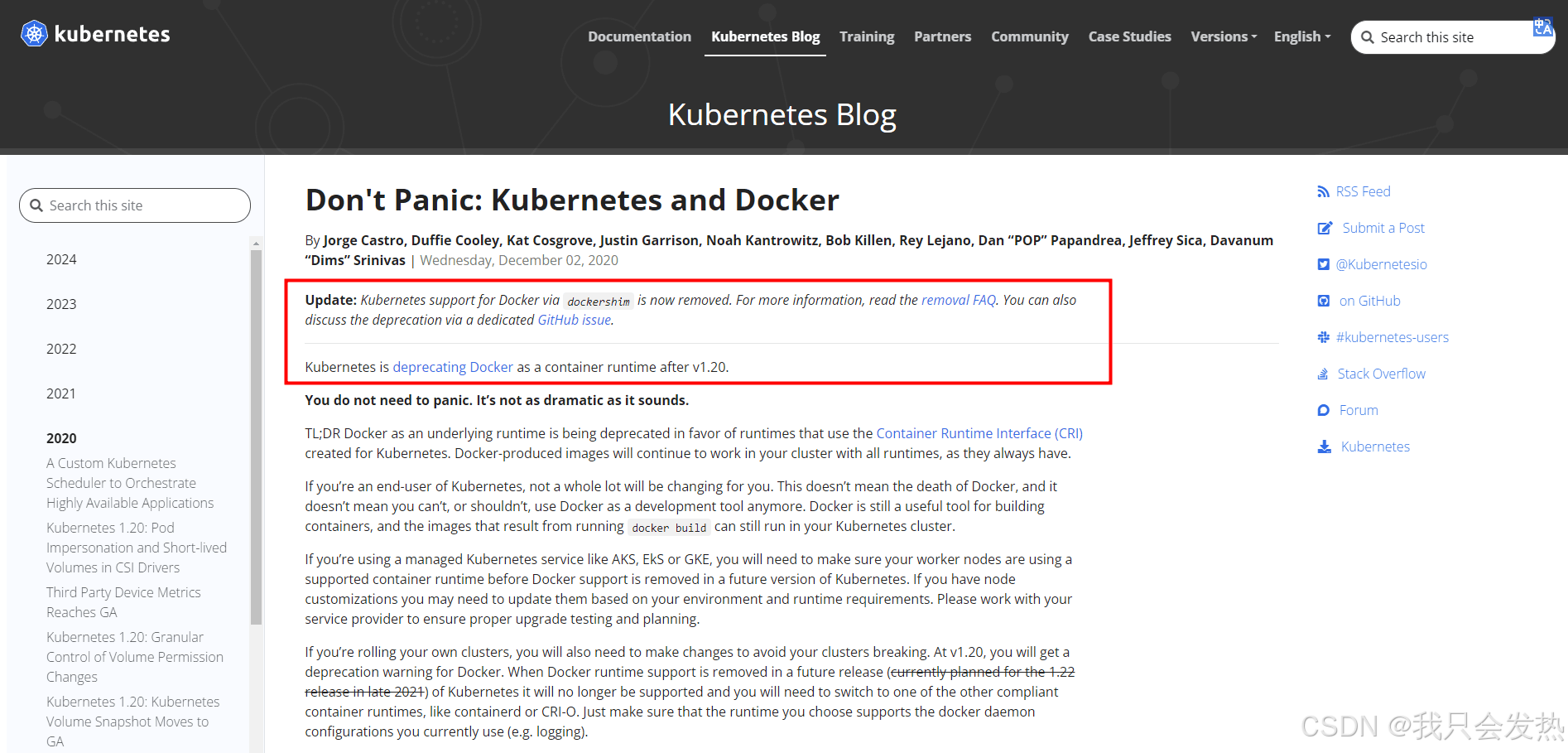

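This article uses Containerd as the container runtime, and images are managed with the crictl and ctr command-line tools. For background: Kubernetes announced in version 1.20 that Docker support through dockershim was deprecated, and it was only removed in version 1.24. So when installing, pay close attention to which container runtimes your Kubernetes version supports. The official site carries a notice about container runtime support (it appears only in the English documentation, not in the Chinese version).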

2.1 Install Containerd
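For orientation, here is a minimal sketch of adding a docker-ce yum repository and installing containerd from it. The repository definition assumes the usual layout of the Aliyun docker-ce mirror, the helper name `write_docker_ce_repo` is hypothetical, and the package version is the one from the component version list. None of it is taken from this article's own commands.

```shell
# write_docker_ce_repo FILE
# Write a yum repo definition for a docker-ce mirror to FILE.
# Assumption: the mirror URL layout below; adjust it to the mirror you use.
write_docker_ce_repo() {
    # The quoted delimiter keeps $releasever and $basearch literal for yum to expand.
    cat > "$1" <<'EOF'
[docker-ce-stable]
name=Docker CE Stable - $basearch
baseurl=https://mirrors.aliyun.com/docker-ce/linux/centos/$releasever/$basearch/stable
enabled=1
gpgcheck=1
gpgkey=https://mirrors.aliyun.com/docker-ce/linux/centos/gpg
EOF
}

# Usage on a real host (assumption: run as root):
#   write_docker_ce_repo /etc/yum.repos.d/docker-ce.repo
#   yum install -y containerd.io-1.6.33-3.1.el7
```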

    ## Add the docker repository; containerd is distributed in the docker repository as well
    $ cat <

2.2 Configure Containerd registry mirrors
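The `sandbox_image` change in this section is made by hand in `vi`; on three machines it may be easier to script. A minimal sketch, assuming GNU sed and a config.toml generated by `containerd config default`; the helper name `set_sandbox_image` is hypothetical.

```shell
# set_sandbox_image FILE IMAGE
# Replace the quoted value of the sandbox_image key in a containerd config.toml.
# Hypothetical helper for illustration; IMAGE must not contain "|" or "&".
set_sandbox_image() {
    # Group 1 captures indentation, key and "=", so the line layout is preserved.
    sed -i -E "s|^([[:space:]]*sandbox_image[[:space:]]*=[[:space:]]*)\"[^\"]*\"|\\1\"$2\"|" "$1"
}

# Usage on a real host (assumption: run as root, then restart containerd):
#   set_sandbox_image /etc/containerd/config.toml registry.aliyuncs.com/google_containers/pause:3.9
```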

    $ mkdir -p /etc/containerd
    $ containerd config default | sudo tee /etc/containerd/config.toml
    ##
    # Edit /etc/containerd/config.toml and point sandbox_image at a mirror inside China
    $ vi /etc/containerd/config.toml
    1) Set sandbox_image = "registry.aliyuncs.com/google_containers/pause:3.9"
    2) After [plugins."io.containerd.grpc.v1.cri".registry.mirrors], around line 153, add the following two lines
    [plugins."io.containerd.grpc.v1.cri".registry.mirrors."docker.io"]
      endpoint = ["https://i9h06ghu.mirror.aliyuncs.com"]
    ## Start Containerd and enable it at boot
    $ systemctl start containerd && systemctl enable containerd

3 Deploy the Kubernetes cluster (each subsection notes which servers to run it on)

3.1 Configure the Kubernetes yum repository (run on all three machines)

    $ cat <<EOF | sudo tee /etc/yum.repos.d/kubernetes.repo
    [kubernetes]
    name=Kubernetes
    baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/
    enabled=1
    gpgcheck=1
    repo_gpgcheck=1
    gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
    EOF

3.2 Install the Kubernetes base services and tools (run on all three machines)

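- kubeadm: the command used to bootstrap the cluster.

- kubelet: runs on every node in the cluster and starts Pods and containers.

- kubectl: the command-line tool for talking to the cluster.

    ## Install the required Kubernetes packages
    ## $ yum install -y kubelet kubeadm kubectl   (unpinned alternative; I installed version 1.28.2)
    $ yum install -y kubelet-1.28.2 kubeadm-1.28.2 kubectl-1.28.2
    $ systemctl start kubelet && systemctl enable kubelet

3.3 Configure the crictl tool (run on all three machines)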

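- crictl is a command-line interface for CRI-compatible container runtimes. You can use it to inspect and debug the container runtime and applications on a Kubernetes node. crictl and its source code live in the cri-tools repository.

- After the move to Containerd, the familiar docker command is no longer used; the crictl and ctr command-line clients take its place.

- crictl follows the CRI interface specification and is typically used to inspect and manage the container runtime and images on kubelet nodes.

- ctr is a client tool for containerd.

3.3.1 Create the crictl configuration file

    $ cat << EOF > /etc/crictl.yaml
    runtime-endpoint: unix:///var/run/containerd/containerd.sock
    image-endpoint: unix:///var/run/containerd/containerd.sock
    timeout: 10
    debug: false
    EOF

3.3.2 Test that crictl works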

    # Pull an Nginx image to verify that crictl works
    $ crictl pull nginx:latest
    Image is up to date for sha256:605c77e624ddb75e6110f997c58876baa13f8754486b461117934b24a9dc3a85
    # List the nginx image
    $ crictl images | grep nginx
    IMAGE                     TAG      IMAGE ID        SIZE
    docker.io/library/nginx   latest   605c77e624ddb   56.7MB

3.4 Generate the init configuration file on the master node (run on the master node)

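- kubeadm offers many configuration options. In a Kubernetes cluster the kubeadm configuration is stored in a ConfigMap; the options can also be written to a configuration file, which makes a complex configuration easier to manage. The kubeadm config command writes that configuration out.

- kubeadm config view: show the configuration values of the current cluster (older kubeadm releases only; with 1.28, read the kubeadm-config ConfigMap in the kube-system namespace instead)

- kubeadm config print join-defaults: print the default parameter file for kubeadm join

- kubeadm config images list: list the required images

- kubeadm config images pull: pull the images to the local machine

- kubeadm config upload from-flags: generate the ConfigMap from command-line flags (older kubeadm releases only)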

    # Generate the init configuration file in the current directory
    $ kubeadm config print init-defaults > init-config.yaml
    # The command above may print a warning like the following; ignore it and continue:
    # W0615 08:50:40.154637 10202 configset.go:202] WARNING: kubeadm cannot validate component configs for API groups [kubelet.config.k8s.io kubeproxy.config.k8s.io]
    # Edit the configuration file; the parts that need changes are marked below
    $ vi init-config.yaml
    apiVersion: kubeadm.k8s.io/v1beta3
    bootstrapTokens:
    - groups:
      - system:bootstrappers:kubeadm:default-node-token
      token: abcdef.0123456789abcdef
      ttl: 24h0m0s
      usages:
      - signing
      - authentication
    kind: InitConfiguration
    localAPIEndpoint:
      advertiseAddress: 192.168.60.143 # change to your master node IP; mine is 192.168.60.143
      bindPort: 6443 # the default port is fine
    nodeRegistration:
      criSocket: unix:///var/run/containerd/containerd.sock
      imagePullPolicy: IfNotPresent
      name: k8s-master # change to your master node hostname; mine is k8s-master
      taints: null
    ---
    apiServer:
      timeoutForControlPlane: 4m0s
    apiVersion: kubeadm.k8s.io/v1beta3
    certificatesDir: /etc/kubernetes/pki
    clusterName: kubernetes
    controllerManager: {}
    dns: {}
    etcd:
      local:
        dataDir: /var/lib/etcd # the default path is fine; host directory mounted into the etcd container
    imageRepository: registry.aliyuncs.com/google_containers # changed to a mirror inside China; the default overseas registry is unreachable
    kind: ClusterConfiguration
    kubernetesVersion: 1.28.0
    networking:
      dnsDomain: cluster.local
      serviceSubnet: 10.96.0.0/12 # the default is fine; range for Service resources, internal to the cluster
      podSubnet: 10.244.0.0/16 # note: newly added; Pod range, must match the address in the pod network plugin below
    scheduler: {}

3.5 Pull the required images on the master node (run on the master node)

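    # List the images required for initialization, based on init-config.yaml
    $ kubeadm config images list --config=init-config.yaml
    ## Pull the images
    $ kubeadm config images pull --config=init-config.yaml
    ## List the pulled images
    $ crictl images

3.6 Initialize the master node and configure networking (run on the master node)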

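(The kubeadm init flags are listed below for reference only.)

- --apiserver-advertise-address (string): the IP address on which the API server advertises that it is listening

- --apiserver-bind-port (int32): the port the API server binds to; default 6443

- --apiserver-cert-extra-sans (stringSlice): optional extra Subject Alternative Names for the API server serving certificate; may be IP addresses or DNS names

- --certificate-key (string): the key used to encrypt the control-plane certificates in the kubeadm-certs Secret

- --control-plane-endpoint (string): a stable IP address or DNS name for the control plane

- --image-repository (string): the container registry to pull control-plane images from; default registry.k8s.io

- --kubernetes-version (string): a specific Kubernetes version for the control plane; default stable-1

- --cri-socket (string): the path of the CRI socket to connect to

- --node-name (string): the name of the node

- --pod-network-cidr (string): the IP address range the Pod network may use; if set, the control plane automatically allocates CIDRs to every node

- --service-cidr (string): an alternative IP address range for Service virtual IPs; default 10.96.0.0/12

- --service-dns-domain (string): an alternative domain for Services; default cluster.local

- --token (string): the token used to establish bidirectional trust between control-plane nodes and worker nodes

- --token-ttl (duration): how long before the token is deleted automatically; 0 means it never expires

- --upload-certs: upload the control-plane certificates to the kubeadm-certs Secret

(kubeadm init does not install a network plugin, so right after initialization the cluster has no pod networking: for example, the nodes listed on k8s-master show "NotReady" and the CoreDNS Pods cannot serve requests. If initialization fails, run kubeadm reset, then rm -rf $HOME/.kube /etc/kubernetes/ /var/lib/etcd/ before retrying.)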

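3.6.1 Initialize Kubernetes on the master node with kubeadm (run on the master node)

Installing with kubeadm sets up all the cluster components, so etcd does not have to be installed by hand.

    ## Initialize Kubernetes
    ## All initialization settings were already made in the configuration file above; just run:
    $ kubeadm init --config=init-config.yaml

3.6.2 Log output of a successful initialization (shown on the master node)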

    Your Kubernetes control-plane has initialized successfully!

    To start using your cluster, you need to run the following as a regular user:

      mkdir -p $HOME/.kube
      sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
      sudo chown $(id -u):$(id -g) $HOME/.kube/config

    Alternatively, if you are the root user, you can run:

      export KUBECONFIG=/etc/kubernetes/admin.conf

    You should now deploy a pod network to the cluster.
    Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:
      https://kubernetes.io/docs/concepts/cluster-administration/addons/

    Then you can join any number of worker nodes by running the following on each as root:

    kubeadm join 192.168.60.143:6443 --token abcdef.0123456789abcdef \
            --discovery-token-ca-cert-hash sha256:464fc74833ffce2ec83745db47d93e323ff47255c551197c949efc8ba6bcba36

3.6.3 Copy the Kubernetes credentials to the user's home directory (run on the master node)

    $ mkdir -p $HOME/.kube
    $ cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
    $ chown $(id -u):$(id -g) $HOME/.kube/config

3.7 Join the worker nodes to the cluster (run on both worker nodes)

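Copy the kubeadm join command printed at the end of the k8s-master initialization, paste it on each worker node, and press Enter; no further configuration is needed. The token and sha256 hash printed at the end are different for every master.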
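Since the join command is copied by hand, a truncated token or hash is an easy mistake. The sketch below checks both values against the formats kubeadm uses (a token of 6 and 16 lowercase alphanumerics separated by a dot, and a hash of `sha256:` followed by 64 hex digits) before the command is run. The helper name `valid_join_args` is hypothetical.

```shell
# valid_join_args TOKEN HASH
# Succeed only if TOKEN looks like a kubeadm bootstrap token and HASH looks
# like a --discovery-token-ca-cert-hash value. Hypothetical helper; it checks
# the format only, not whether the values belong to a particular cluster.
valid_join_args() {
    printf '%s\n' "$1" | grep -Eq '^[a-z0-9]{6}\.[a-z0-9]{16}$' || return 1
    printf '%s\n' "$2" | grep -Eq '^sha256:[0-9a-f]{64}$' || return 1
}

# Usage with the values pasted from the master's output:
#   valid_join_args "$TOKEN" "$HASH" && kubeadm join 192.168.60.143:6443 \
#       --token "$TOKEN" --discovery-token-ca-cert-hash "$HASH"
```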

    $ kubeadm join 192.168.60.143:6443 --token abcdef.0123456789abcdef \
        --discovery-token-ca-cert-hash sha256:464fc74833ffce2ec83745db47d93e323ff47255c551197c949efc8ba6bcba36
    # If the join command is lost, generate a new one on the master node
    $ kubeadm token create --print-join-command

3.8 Check the status of each node from the master node (run on the master node)

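As noted earlier, initializing k8s-master does not set up pod networking, so every node reports "NotReady". The worker nodes added with kubeadm join are nevertheless already visible on k8s-master. For the same reason, it is normal for the coredns Pods to stay Pending at this point.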
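To check node readiness from a script rather than by eye, the output of `kubectl get nodes` can be filtered on its STATUS column. A minimal sketch; the helper name `not_ready_nodes` is hypothetical, and it treats any status other than exactly `Ready` (including `Ready,SchedulingDisabled`) as not ready.

```shell
# not_ready_nodes
# Read `kubectl get nodes` output on stdin and print the name of every node
# whose STATUS column is not exactly "Ready". Hypothetical helper.
not_ready_nodes() {
    # NR > 1 skips the header row; $1 is NAME and $2 is STATUS.
    awk 'NR > 1 && $2 != "Ready" { print $1 }'
}

# Usage on a machine with a working kubeconfig:
#   kubectl get nodes | not_ready_nodes
```

An empty result means every listed node is Ready.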

    ## List the nodes
    $ kubectl get nodes
    NAME         STATUS     ROLES    AGE   VERSION
    k8s-master   NotReady   master   44m   v1.28.2
    k8s-node1    NotReady   <none>   25m   v1.28.2
    k8s-node2    NotReady   <none>   25m   v1.28.2
    ## Check the status of the Pods running on the master node
    $ kubectl get pods --all-namespaces -o wide
    NAMESPACE     NAME                                 READY   STATUS    RESTARTS   AGE   IP               NODE         NOMINATED NODE   READINESS GATES
    kube-system   coredns-66f779496c-ccj8c             0/1     Pending   0          52m   <none>           <none>       <none>           <none>
    kube-system   coredns-66f779496c-mvx6k             0/1     Pending   0          52m   <none>           <none>       <none>           <none>
    kube-system   etcd-k8s-master                      1/1     Running   0          52m   192.168.60.143   k8s-master   <none>           <none>
    kube-system   kube-apiserver-k8s-master            1/1     Running   0          52m   192.168.60.143   k8s-master   <none>           <none>
    kube-system   kube-controller-manager-k8s-master   1/1     Running   0          52m   192.168.60.143   k8s-master   <none>           <none>
    kube-system   kube-proxy-8fbwr                     1/1     Running   0          33m   192.168.60.145   k8s-node2    <none>           <none>
    kube-system   kube-proxy-h9xwc                     1/1     Running   0          33m   192.168.60.144   k8s-node1    <none>           <none>
    kube-system   kube-proxy-rzdtk                     1/1     Running   0          52m   192.168.60.143   k8s-master   <none>           <none>
    kube-system   kube-scheduler-k8s-master            1/1     Running   0          52m   192.168.60.143   k8s-master   <none>           <none>

4 Deploy the flannel network plugin (each subsection notes which servers to run it on)

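4.1 Download the flannel plugin (run on all three machines)

- The tutorials on other blogs fetch this plugin from an overseas source, which cannot be downloaded without a proxy, so I uploaded it to CSDN resources.

- Files required to deploy flannel: https://download.csdn.net/download/qq_23845083/89556119

- The package contains three files, flannel-cni-plugin-v1.1.2.tar, flannel.tar and kube-flannel.yaml, all of which are used below.

- Put the three files in any directory on the server; I use /home/soft.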
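Before applying kube-flannel.yaml, it is worth confirming that its network matches the `podSubnet` set in init-config.yaml in 3.4, because a mismatch leaves Pods without working networking. The sketch below compares the two values. It assumes the bundled kube-flannel.yaml follows the upstream layout, where net-conf.json contains a line like `"Network": "10.244.0.0/16"`; the helper names are hypothetical.

```shell
# pod_subnet FILE: print the podSubnet CIDR from a kubeadm configuration file.
pod_subnet() {
    sed -n -E 's/^[[:space:]]*podSubnet:[[:space:]]*([0-9.]+\/[0-9]+).*$/\1/p' "$1" | head -n 1
}

# flannel_network FILE: print the "Network" CIDR from the flannel manifest.
# Assumption: the manifest embeds net-conf.json with a "Network" key.
flannel_network() {
    sed -n -E 's/^.*"Network":[[:space:]]*"([0-9.]+\/[0-9]+)".*$/\1/p' "$1" | head -n 1
}

# subnets_match KUBEADM_CONFIG FLANNEL_MANIFEST
# Succeed only if both files define the same, non-empty CIDR.
subnets_match() {
    _pod=$(pod_subnet "$1")
    _net=$(flannel_network "$2")
    [ -n "$_pod" ] && [ "$_pod" = "$_net" ]
}

# Usage from the directory that holds both files:
#   subnets_match init-config.yaml kube-flannel.yaml || echo "pod CIDR mismatch"
```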

4.2 Load the flannel images (run on all three machines)

    ## Import the images; be sure to run these commands in the directory that holds the image archives
    $ ctr -n k8s.io i import flannel-cni-plugin-v1.1.2.tar
    $ ctr -n k8s.io i import flannel.tar
    # List the images
    $ crictl images | grep flannel
    docker.io/flannel/flannel-cni-plugin   v1.1.2    7a2dcab94698c   8.25MB
    docker.io/flannel/flannel              v0.21.5   a6c0cb5dbd211   69.9MB

4.3 Deploy the network plugin (run on the master node)

    $ kubectl apply -f kube-flannel.yaml

4.4 Enable kubectl on the worker nodes (run on both worker nodes)

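4.4.1 At this point kubectl fails on the worker nodes: (run on both worker nodes)

- E0709 15:29:19.693750 97386 memcache.go:265] couldn't get current server API group list: Get "http://localhost:8080/api?timeout=32s": dial tcp [::1]:8080: connect: connection refused

- The connection to the server localhost:8080 was refused - did you specify the right host or port?

4.4.2 Analysis and fix: (run on both worker nodes)

- The cause is that kubectl on the worker nodes has no kubeconfig, so it falls back to localhost:8080; it needs the kubernetes-admin credentials to reach the cluster.

- Copy /etc/kubernetes/admin.conf from the master node to the same directory on each worker node, then set the environment variable.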

    ## Set the environment variable
    $ echo "export KUBECONFIG=/etc/kubernetes/admin.conf" >> ~/.bash_profile
    ## Apply it immediately
    $ source ~/.bash_profile

4.5 Check node and component status (can be run on any of the three machines)

    ## Check node status
    $ kubectl get nodes
    NAME         STATUS   ROLES    AGE   VERSION
    k8s-master   Ready    master   23m   v1.28.2
    k8s-node1    Ready    <none>   14m   v1.28.2
    k8s-node2    Ready    <none>   14m   v1.28.2
    ## Check the status of the Pods running on the master node
    $ kubectl get pods --all-namespaces -o wide
    NAMESPACE      NAME                                 READY   STATUS    RESTARTS   AGE     IP               NODE         NOMINATED NODE   READINESS GATES
    kube-flannel   kube-flannel-ds-7rzg7                1/1     Running   0          5m13s   192.168.60.145   k8s-node2    <none>           <none>
    kube-flannel   kube-flannel-ds-fxzg4                1/1     Running   0          5m13s   192.168.60.143   k8s-master   <none>           <none>
    kube-flannel   kube-flannel-ds-gp45f                1/1     Running   0          5m13s   192.168.60.144   k8s-node1    <none>           <none>
    kube-system    coredns-66f779496c-ccj8c             1/1     Running   0          106m    10.244.0.2       k8s-master   <none>           <none>
    kube-system    coredns-66f779496c-mvx6k             1/1     Running   0          106m    10.244.2.2       k8s-node2    <none>           <none>
    kube-system    etcd-k8s-master                      1/1     Running   0          106m    192.168.60.143   k8s-master   <none>           <none>
    kube-system    kube-apiserver-k8s-master            1/1     Running   0          106m    192.168.60.143   k8s-master   <none>           <none>
    kube-system    kube-controller-manager-k8s-master   1/1     Running   0          106m    192.168.60.143   k8s-master   <none>           <none>
    kube-system    kube-proxy-8fbwr                     1/1     Running   0          87m     192.168.60.145   k8s-node2    <none>           <none>
    kube-system    kube-proxy-h9xwc                     1/1     Running   0          87m     192.168.60.144   k8s-node1    <none>           <none>
    kube-system    kube-proxy-rzdtk                     1/1     Running   0          106m    192.168.60.143   k8s-master   <none>           <none>
    kube-system    kube-scheduler-k8s-master            1/1     Running   0          106m    192.168.60.143   k8s-master   <none>           <none>
    ## Check the Pods in a given namespace
    $ kubectl get pods -n kube-system
    NAME                                 READY   STATUS    RESTARTS   AGE
    coredns-7ff77c879f-25bzd             1/1     Running   0          23m
    coredns-7ff77c879f-wp885             1/1     Running   0          23m
    etcd-k8s-master                      1/1     Running   0          24m
    kube-apiserver-k8s-master            1/1     Running   0          24m
    kube-controller-manager-k8s-master   1/1     Running   0          24m
    kube-proxy-2tphl                     1/1     Running   0          15m
    kube-proxy-hqppj                     1/1     Running   0          15m
    kube-proxy-rfxw2                     1/1     Running   0          23m
    kube-scheduler-k8s-master            1/1     Running   0          24m
    ## Check all Pods
    $ kubectl get pods -A
    NAMESPACE      NAME                                 READY   STATUS    RESTARTS   AGE
    kube-flannel   kube-flannel-ds-h727x                1/1     Running   0          77s
    kube-flannel   kube-flannel-ds-kbztr                1/1     Running   0          77s
    kube-flannel   kube-flannel-ds-nw9pr                1/1     Running   0          77s
    kube-system    coredns-7ff77c879f-25bzd             1/1     Running   0          24m
    kube-system    coredns-7ff77c879f-wp885             1/1     Running   0          24m
    kube-system    etcd-k8s-master                      1/1     Running   0          24m
    kube-system    kube-apiserver-k8s-master            1/1     Running   0          24m
    kube-system    kube-controller-manager-k8s-master   1/1     Running   0          24m
    kube-system    kube-proxy-2tphl                     1/1     Running   0          15m
    kube-system    kube-proxy-hqppj                     1/1     Running   0          15m
    kube-system    kube-proxy-rfxw2                     1/1     Running   0          24m
    kube-system    kube-scheduler-k8s-master            1/1     Running   0          24m
    ## Check cluster component status
    $ kubectl get cs
    NAME                 STATUS    MESSAGE                         ERROR
    controller-manager   Healthy   ok
    scheduler            Healthy   ok
    etcd-0               Healthy   {"health":"true","reason":""}

5 Deploy kubernetes-dashboard (web UI, deployed from the master node)

5.1 Configure and start kubernetes-dashboard

    ## Step 1: fetch the resource manifest
    $ wget https://raw.githubusercontent.com/kubernetes/dashboard/v2.0.0-rc5/aio/deploy/recommended.yaml
    ## Step 2: edit the manifest; around line 39, modify the Service it defines
    $ vi recommended.yaml
    spec:
      type: NodePort # added
      ports:
        - port: 443
          targetPort: 8443
          nodePort: 31000 # web access port; choose your own
      selector:
        k8s-app: kubernetes-dashboard
    ## Step 3: deploy the application
    $ kubectl apply -f recommended.yaml
    ## Step 4: create the manifest for the admin-user account and its authorization
    $ cat>dashboard-adminuser.yml<

5.2 Login verification

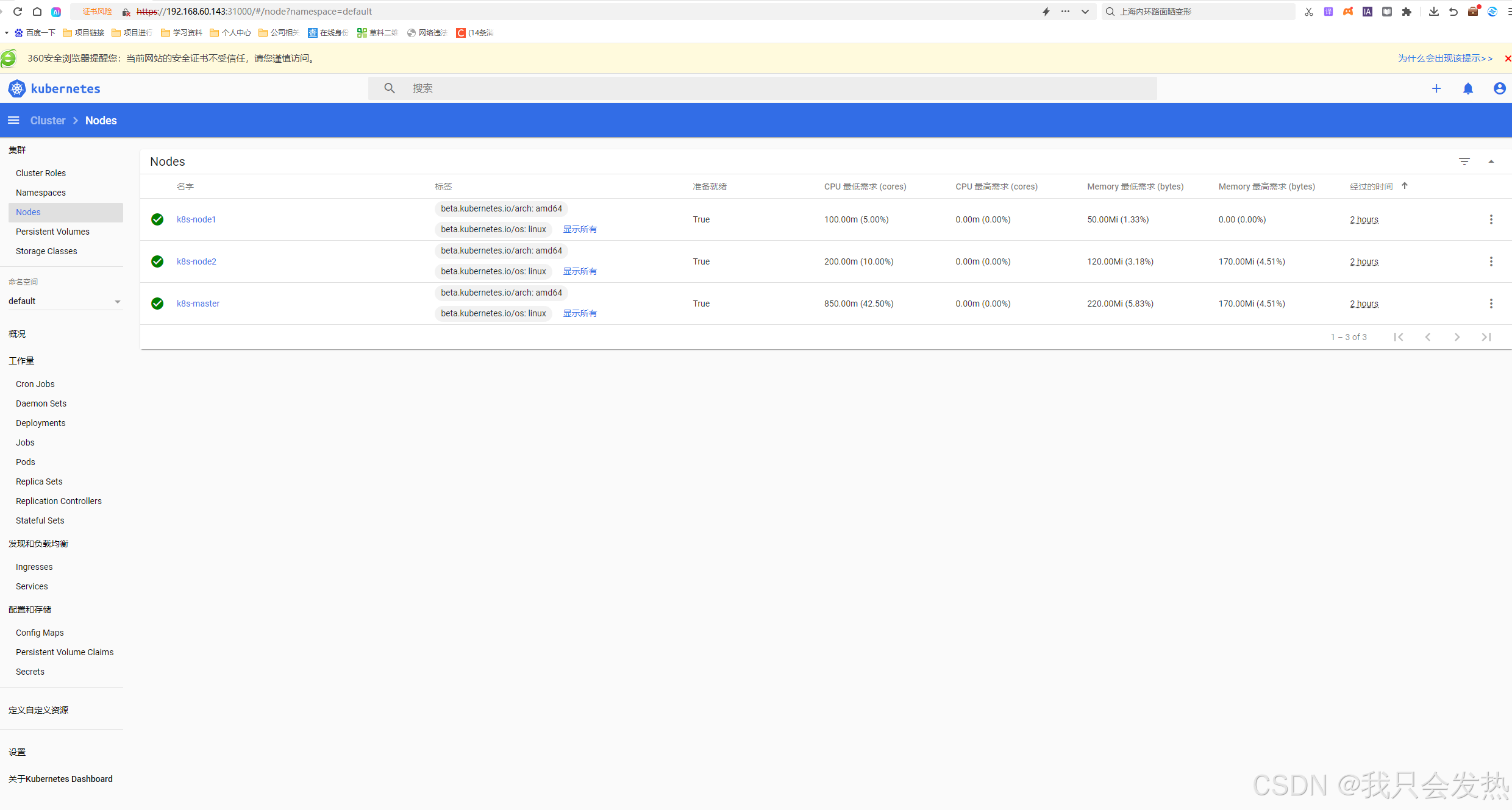

- Open the master node's IP address, https://192.168.60.143:31000, and log in with the token.
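Logging in with a token requires the admin-user account from step 4 of 5.1. As a rough guide, a manifest of the following shape, modelled on the dashboard project's sample-user documentation, creates a ServiceAccount and binds it to cluster-admin. Treat the manifest content, the helper name `write_admin_user_manifest`, and the token command in the usage comment as assumptions rather than the article's original text.

```shell
# write_admin_user_manifest FILE
# Write a ServiceAccount plus a cluster-admin ClusterRoleBinding for it.
# Assumption: the dashboard runs in the kubernetes-dashboard namespace,
# as in the upstream recommended.yaml.
write_admin_user_manifest() {
    cat > "$1" <<'EOF'
apiVersion: v1
kind: ServiceAccount
metadata:
  name: admin-user
  namespace: kubernetes-dashboard
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: admin-user
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: cluster-admin
subjects:
- kind: ServiceAccount
  name: admin-user
  namespace: kubernetes-dashboard
EOF
}

# Usage on the master node:
#   write_admin_user_manifest dashboard-adminuser.yml
#   kubectl apply -f dashboard-adminuser.yml
#   kubectl -n kubernetes-dashboard create token admin-user   # Kubernetes 1.24+
```

Binding to cluster-admin gives the dashboard full control of the cluster, which is convenient for a lab setup but too broad for production.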

Reference article: https://blog.csdn.net/weixin_73059729/article/details/139695528
